Suppose you run a YouTube channel and upload videos at a regular cadence. Whenever a new video is published, you want to cross-post it to the other social media channels you run. What would be the best way to do so? There are hundreds of commercial tools on the market for online content marketing, and hundreds of companies that offer their own proprietary solutions. Using those tools or companies may well be the best option. However, what if your circumstances require you to build your own? What if the existing tools don't fulfil your requirements? Then it's a good time to build the solution yourself, isn't it?

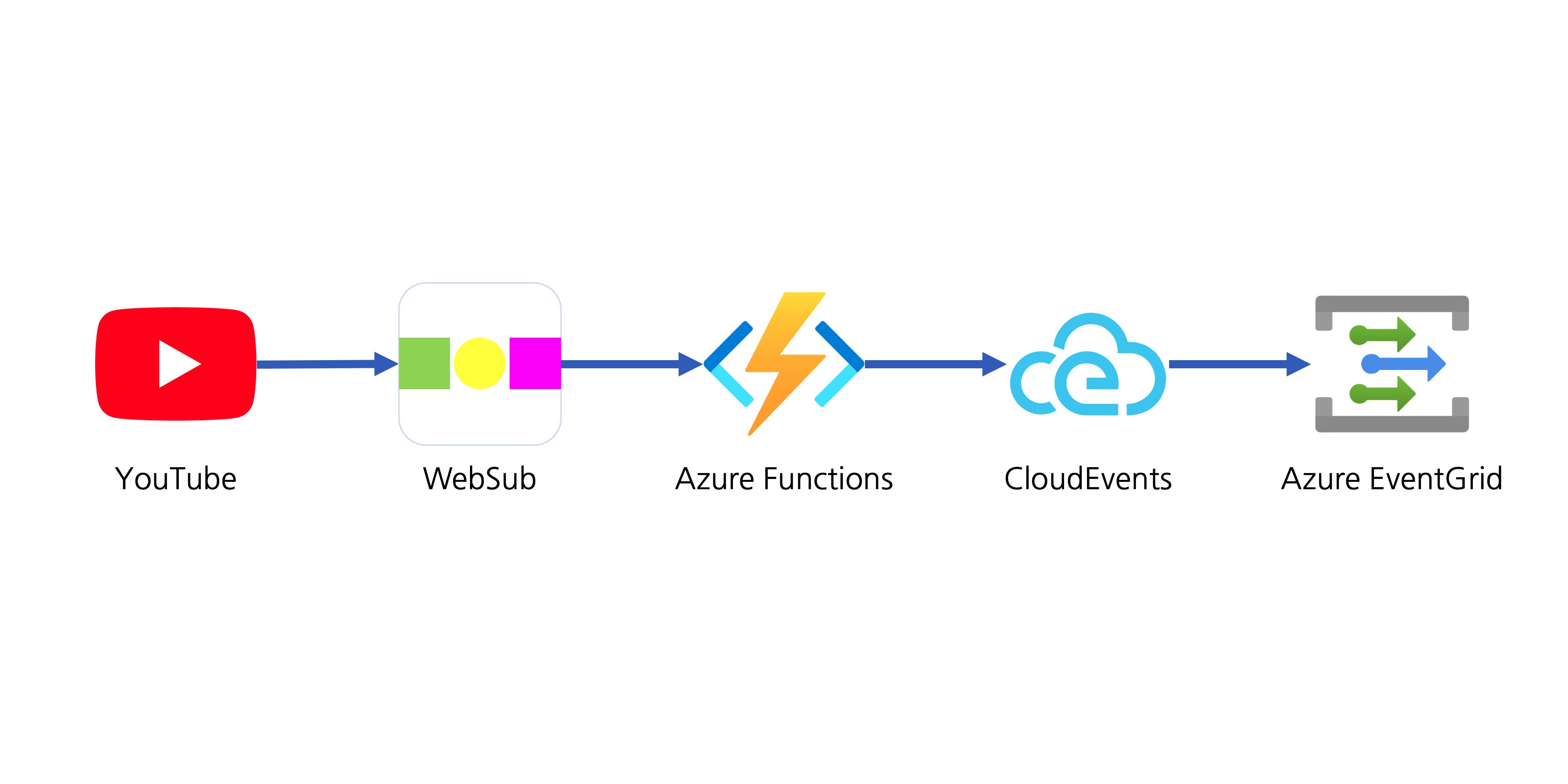

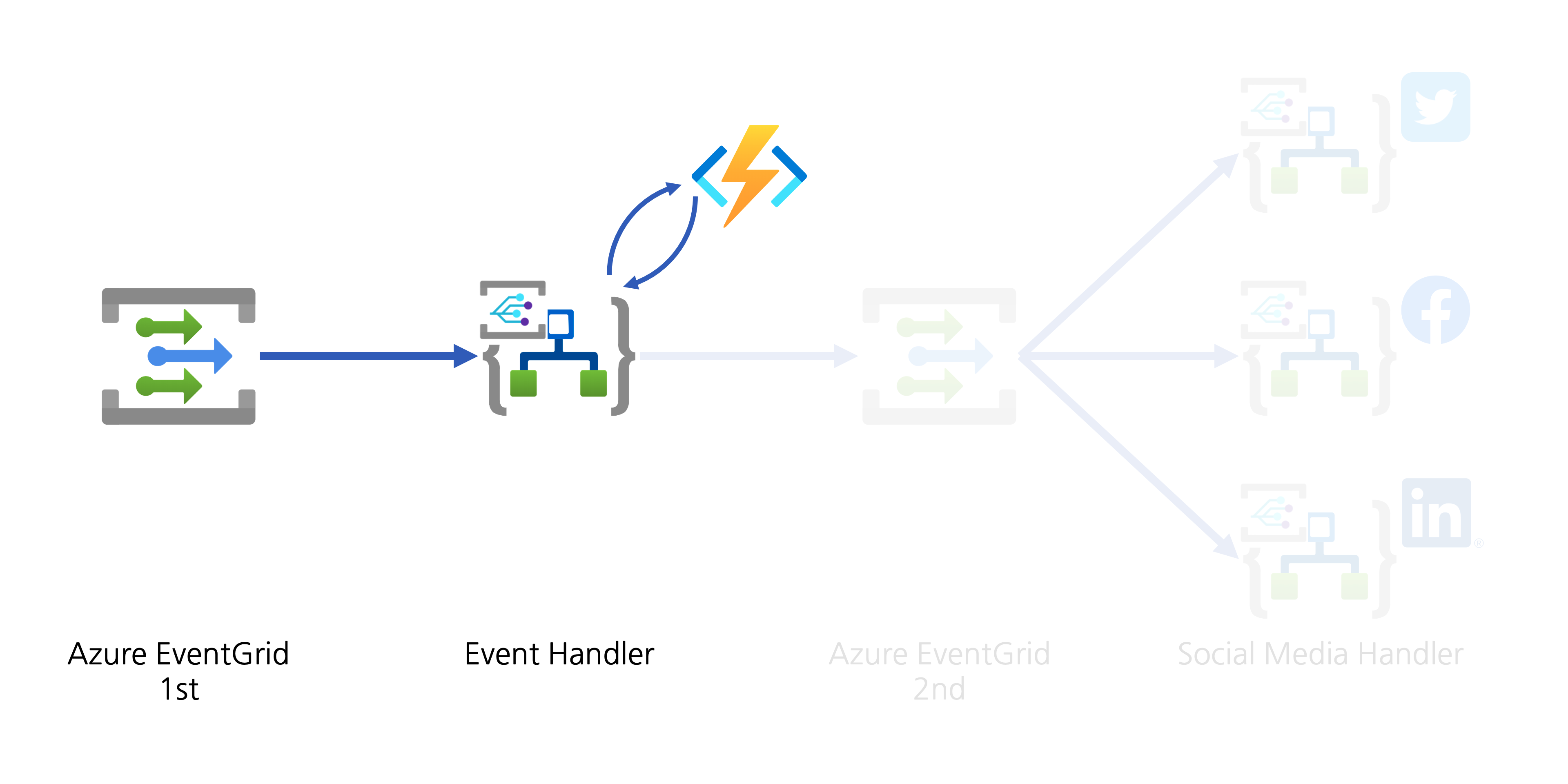

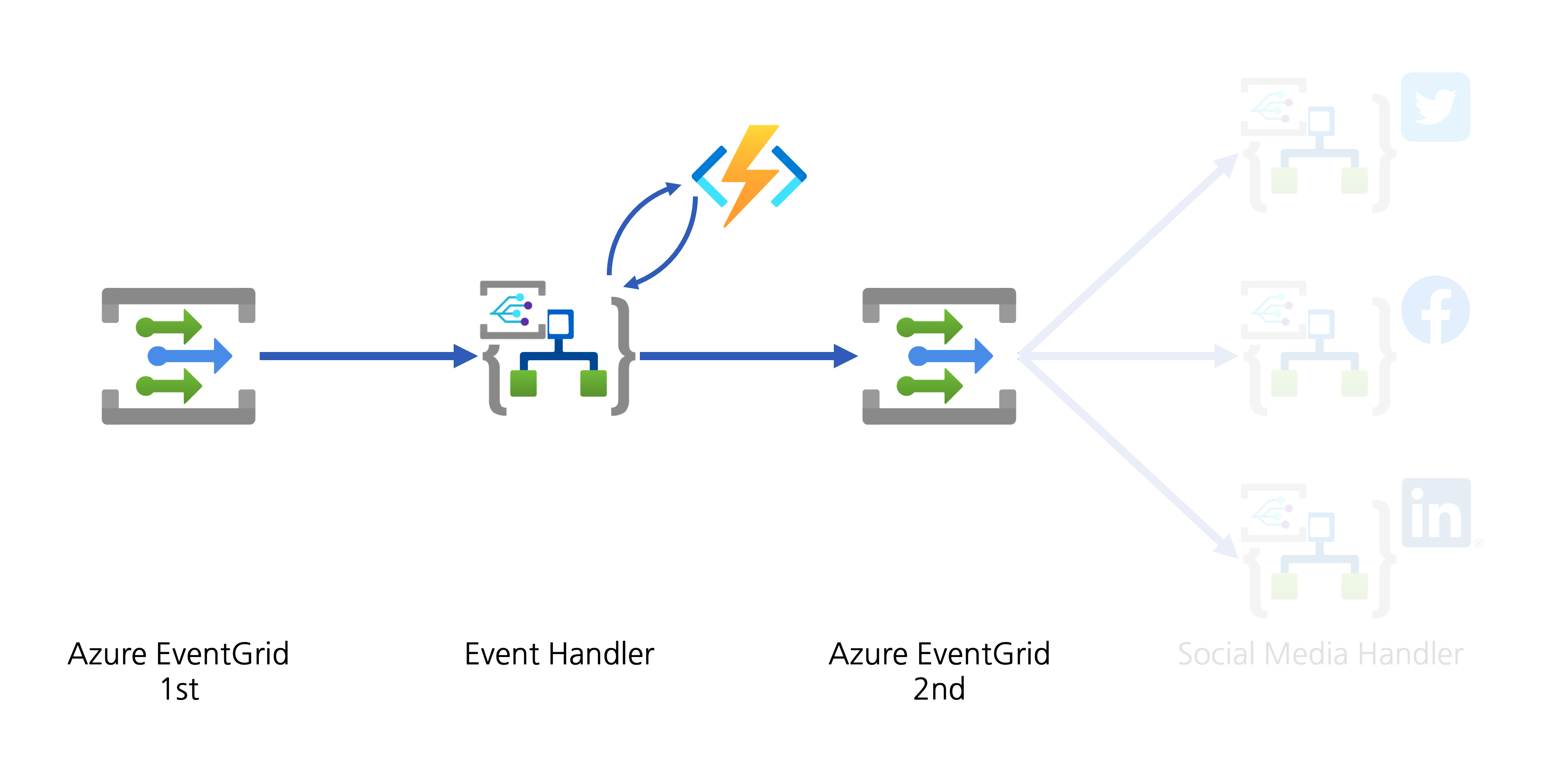

This post will discuss an end-to-end workflow story, from YouTube video update to other social media exposure. For the workflow, let's use Azure serverless services like Azure EventGrid, Azure Functions and Azure Logic Apps.

If you'd like to see the source code of the solution, see this GitHub repository. It's completely open-source, and you can use it at your own discretion, with care.

Subscribing to YouTube Notification Feed

YouTube uses a protocol called PubSubHubbub for its notification mechanism. It became a web standard called WebSub in 2018, after the first working draft in 2016.

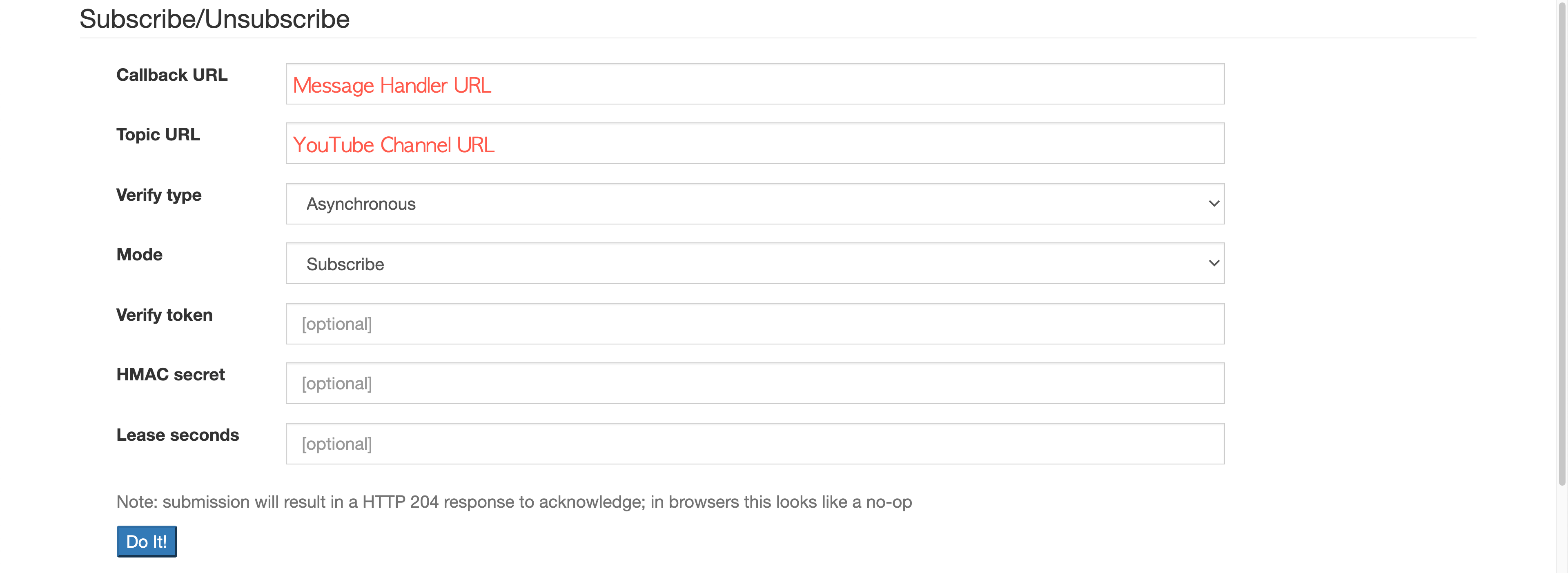

Google registers all YouTube channels with its WebSub Hub. Therefore, if you want to get update notifications from a specific channel, you can simply send a subscription request to the Hub. To subscribe, enter the message handler URL and the YouTube channel URL, then click the Do It! button. Too easy!

Note that the subscription is completed only after the message handler passes the verification request.
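The same subscription can also be requested from code instead of the web form. Here is a minimal sketch, assuming the Hub accepts the standard WebSub form fields (`hub.callback`, `hub.mode` and `hub.topic`) at `https://pubsubhubbub.appspot.com/subscribe`. The callback URL is a placeholder, and `BuildSubscriptionForm` is a hypothetical helper that is not part of the repository.

```csharp
using System;
using System.Collections.Generic;
using System.Net.Http;

// Hypothetical helper: builds the form fields of a WebSub subscription request.
static Dictionary<string, string> BuildSubscriptionForm(string callbackUrl, string channelId) =>
    new Dictionary<string, string>
    {
        ["hub.callback"] = callbackUrl,   // the message handler URL
        ["hub.mode"] = "subscribe",
        ["hub.topic"] = $"https://www.youtube.com/xml/feeds/videos.xml?channel_id={channelId}",
    };

var form = BuildSubscriptionForm("https://example.com/api/callback", "[channel_id]");
using var content = new FormUrlEncodedContent(form);

// Print the URL-encoded body that would be sent to the Hub.
Console.WriteLine(await content.ReadAsStringAsync());

// To actually subscribe, POST the form to the Hub (assumed endpoint):
// using var http = new HttpClient();
// var response = await http.PostAsync("https://pubsubhubbub.appspot.com/subscribe", content);
```

The network call is left commented out so that the sketch runs without touching the Hub.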

Verifying WebSub Subscription Request

To verify the WebSub subscription request, the WebSub Hub sends an API call to the message handler. When it arrives, the handler MUST deal with the following.

- The verification request is a `GET` request with the following query parameters:
  - `hub.mode`: The `subscribe` string.
  - `hub.topic`: The YouTube channel URL.
  - `hub.challenge`: A random string generated by the WebSub Hub, used for the verification.
  - `hub.lease_seconds`: The number of seconds the subscription stays active, counted from the time of the verification request. The subscription lapses unless it is renewed within this period.
- The response to the verification request MUST include the `hub.challenge` value in the response body, with the HTTP status code of 200:
  - If the response body includes anything other than the `hub.challenge` value, the WebSub Hub won't accept it as a valid response.

Here's the verification request handling logic in the Azure Function method:

```csharp
[FunctionName("CallbackAsync")]
public async Task<IActionResult> CallbackAsync(
    [HttpTrigger(AuthorizationLevel.Function, "GET", "POST", Route = "callback")] HttpRequest req,
    ILogger log)
{
    // A GET request is the subscription verification from the WebSub Hub.
    if (HttpMethods.IsGet(req.Method))
    {
        // Echo the challenge back as the response body with 200 (OK).
        string challenge = req.Query["hub.challenge"];
        var result = new ObjectResult(challenge) { StatusCode = 200 };

        return result;
    }

    // The POST (notification) handling continues in the snippets below.
```
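The handler above echoes back any challenge it receives. If you want it to accept only subscriptions you actually asked for, the decision can be isolated into a small function. The following is a sketch only: `VerifySubscription` is a hypothetical helper, and it assumes you know the topic URL you expect. It follows the WebSub rule that a subscriber echoes the challenge on success and responds with 404 when it doesn't agree with the request.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper: decides the response to a WebSub verification request.
// Returns the HTTP status code and the response body.
static (int Status, string Body) VerifySubscription(
    IReadOnlyDictionary<string, string> query, string expectedTopic)
{
    query.TryGetValue("hub.mode", out var mode);
    query.TryGetValue("hub.topic", out var topic);
    query.TryGetValue("hub.challenge", out var challenge);

    var accepted = mode == "subscribe"
                   && topic == expectedTopic
                   && !string.IsNullOrEmpty(challenge);

    // Echo the challenge and nothing else; otherwise reject with 404.
    return accepted ? (200, challenge) : (404, string.Empty);
}

var expected = "https://www.youtube.com/xml/feeds/videos.xml?channel_id=[channel_id]";
var query = new Dictionary<string, string>
{
    ["hub.mode"] = "subscribe",
    ["hub.topic"] = expected,
    ["hub.challenge"] = "random-string",
};

var (status, body) = VerifySubscription(query, expected);
Console.WriteLine($"{status} {body}");
```

Inside the Azure Function, the query dictionary would be built from `req.Query`, and the tuple mapped onto the result object.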

Once the message handler is verified, the WebSub Hub sends a notification message to the handler whenever the subscribed channel publishes or updates a video.

Converting WebSub Notification Feed

WebSub follows the familiar Publisher/Subscriber (Pub/Sub) pattern, so the mechanism itself is nothing new. The only WebSub-specific part is the event data, which reuses the existing ATOM feed format. As long as a subscriber understands the feed format, everything works. In other words, the subscriber has a strong dependency on the event data format the publisher sends.

In modern application environments, it's recommended to decouple the publisher and the subscriber as much as possible so that each can grow independently. That is, the subscriber shouldn't have to know the ATOM feed format. How can we decouple them, then? The event data format, or message format, needs to be canonicalised. Converting the canonical data into the subscriber-specific format is then done on the subscriber's end.

Therefore, we are going to use CloudEvents as the canonical data format. There are two steps for the conversion: 1) canonicalisation and 2) domain-specific conversion. Let's have a look.

1. Canonicalisation: WebSub Feed ➡️ CloudEvents

The purpose of this step is to decouple my application from the WebSub Hub. The XML data delivered from the WebSub Hub is simply wrapped in the CloudEvents format. When a new video is uploaded to YouTube, YouTube notifies the WebSub Hub, and the notification looks like the following:

```xml
<feed xmlns:yt="http://www.youtube.com/xml/schemas/2015" xmlns="http://www.w3.org/2005/Atom">
  <link rel="hub" href="https://pubsubhubbub.appspot.com"/>
  <link rel="self" href="https://www.youtube.com/xml/feeds/videos.xml?channel_id=[channel_id]"/>
  <title>YouTube video feed</title>
  <updated>2021-01-27T07:00:00.123456789+00:00</updated>
  <entry>
    <id>yt:video:[video_id]</id>
    <yt:videoId>[video_id]</yt:videoId>
    <yt:channelId>[channel_id]</yt:channelId>
    <title>hello world</title>
    <link rel="alternate" href="http://www.youtube.com/watch?v=[video_id]"/>
    <author>
      <name>My Channel</name>
      <uri>http://www.youtube.com/channel/[channel_id]</uri>
    </author>
    <published>2021-01-27T07:00:00+00:00</published>
    <updated>2021-01-27T07:00:00.123456789+00:00</updated>
  </entry>
</feed>
```

As the message handler receives this notification through a POST request, the request body is read as a string like this:

```csharp
// Read the whole request body (the ATOM feed XML) as a string.
var payload = default(string);
using (var reader = new StreamReader(req.Body))
{
    payload = await reader.ReadToEndAsync().ConfigureAwait(false);
}
```

The request header also contains the following Link information:

```text
Link: <https://pubsubhubbub.appspot.com>; rel=hub, <https://www.youtube.com/xml/feeds/videos.xml?channel_id=[channel_id]>; rel=self
```

As it includes the YouTube channel URL as the message source, we need to extract it.

```csharp
// Requires: using System.Linq;
var headers = req.Headers.ToDictionary(p => p.Key, p => string.Join("|", p.Value));

// Split the Link header into "rel=..." => URL pairs.
var links = headers["Link"]
    .Split(new[] { "," }, StringSplitOptions.RemoveEmptyEntries)
    .Select(p => p.Trim().Split(new[] { ";" }, StringSplitOptions.RemoveEmptyEntries))
    .ToDictionary(p => p.Last().Trim(), p => p.First().Trim().Replace("<", string.Empty).Replace(">", string.Empty));

// The "self" link is the YouTube channel feed URL, used as the event source.
var source = links["rel=self"];
```
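To try the parsing outside of Azure Functions, the same idea can be written as a small standalone function and run against the sample header shown earlier. This is a sketch under assumptions: `ParseLinkHeader` is a hypothetical helper, it keys the result by the bare `rel` value (`self` instead of `rel=self`), and it also tolerates quoted values such as `rel="self"`. Like the snippet above, it splits on commas, so it assumes the URLs contain none.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical helper: maps each rel value (e.g. "self") to its URL.
static Dictionary<string, string> ParseLinkHeader(string header) =>
    header.Split(new[] { ',' }, StringSplitOptions.RemoveEmptyEntries)
          .Select(link => link.Split(new[] { ';' }, StringSplitOptions.RemoveEmptyEntries))
          .Where(parts => parts.Length >= 2)   // skip entries without a rel parameter
          .ToDictionary(
              parts => parts[1].Trim().Replace("rel=", string.Empty).Trim('"'),
              parts => parts[0].Trim().TrimStart('<').TrimEnd('>'));

var header = "<https://pubsubhubbub.appspot.com>; rel=hub, "
           + "<https://www.youtube.com/xml/feeds/videos.xml?channel_id=[channel_id]>; rel=self";

var links = ParseLinkHeader(header);
Console.WriteLine(links["self"]);
```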

Then, set the event type and content type like the following.

```csharp
var type = "com.youtube.video.published";
var contentType = "application/cloudevents+json";
```

As I mentioned in my previous post, at the time of this writing, the Azure EventGrid binding for Azure Functions has limited support for the CloudEvents format. Therefore, I should publish the event manually:

```csharp
    // Wrap the raw XML payload in a CloudEvents envelope.
    var @event = new CloudEvent(source, type, payload, contentType);
    var events = new List<CloudEvent>() { @event };

    // Replace the placeholders with your own EventGrid topic endpoint and access key.
    var topicEndpoint = new Uri("https://<eventgrid_name>.<location>-<random_number>.eventgrid.azure.net/api/events");
    var credential = new AzureKeyCredential("eventgrid_topic_access_key");
    var publisher = new EventGridPublisherClient(topicEndpoint, credential);

    var response = await publisher.SendEventsAsync(events).ConfigureAwait(false);

    return new StatusCodeResult(response.Status);
}
```

So far, the WebSub data has been canonicalised in the CloudEvents format and sent to EventGrid. The canonicalised information looks like this:

```json
{
  "id": "c2e9b2d1-802c-429d-b772-046230a9261e",
  "source": "https://www.youtube.com/xml/feeds/videos.xml?channel_id=<channel_id>",
  "data": "<websub_xml_data>",
  "type": "com.youtube.video.published",
  "time": "2021-01-27T07:00:00.123456Z",
  "specversion": "1.0",
  "datacontenttype": "application/cloudevents+json",
  "traceparent": "00-37d33dfa0d909047b8215349776d7268-809f0432fbdfd94b-00"
}
```

Now, we have cut the dependency on the WebSub Hub.

Let's move onto the next step.

2. Domain-Specific Conversion: WebSub XML Data Manipulation

At this step, the XML data is actually converted into the format we're going to use for social media amplification.

The WebSub XML data only contains the bare minimum of information, like the video ID and channel ID. Therefore, we need to call a YouTube API to get more details for social media amplification. An event handler should be registered on Azure EventGrid to handle the published event data. Like the WebSub subscription process, this also requires the event handler endpoint to be validated. One of the good things about using Azure Logic Apps as the event handler is that it takes care of the whole validation process internally. Therefore, we just use a Logic App to handle the event data.

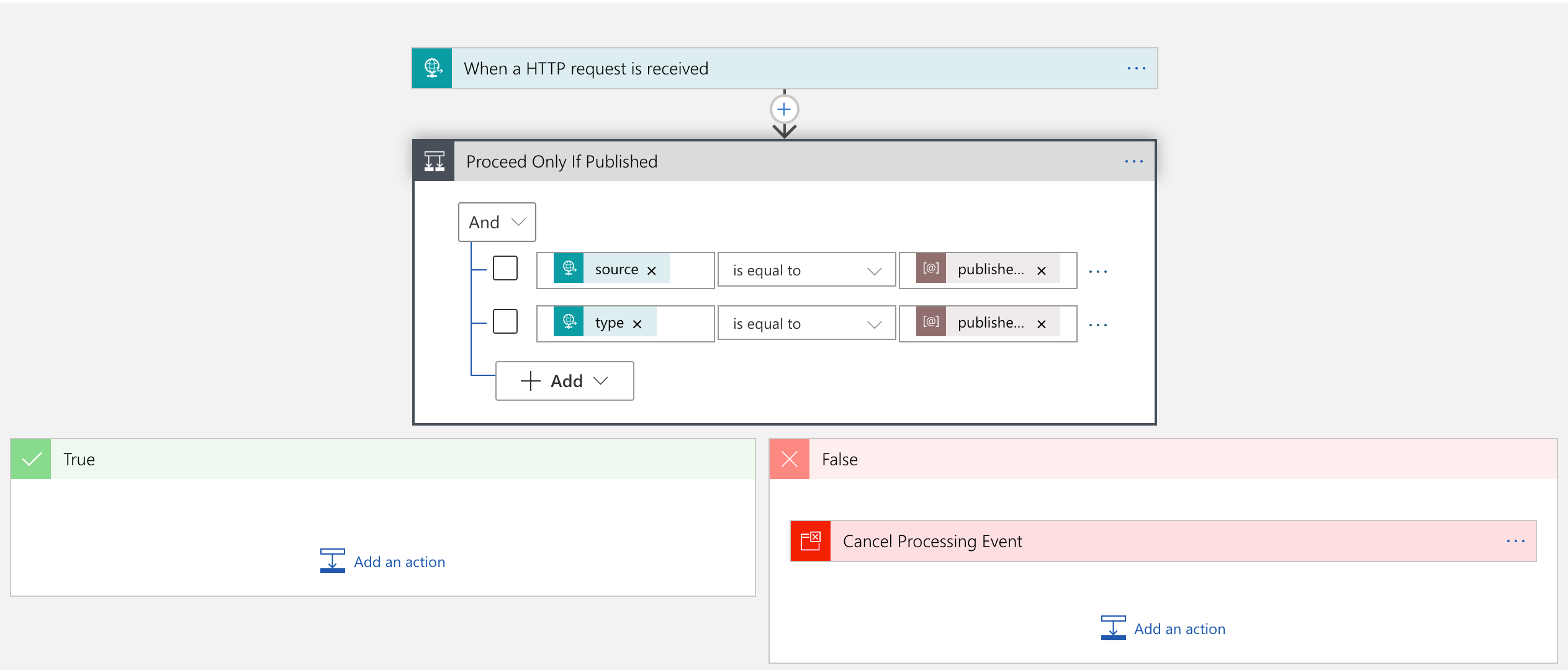

The Logic App handler's first action is to verify whether the event data is what I am looking for: it should match my channel ID and the event type of com.youtube.video.published. If either the channel ID or the event type is different, the handler stops processing.
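The condition itself is simple enough to express in a few lines of code. The sketch below is not the Logic App definition; `ShouldProcess` is a hypothetical helper, and it assumes the channel ID is checked against the `channel_id` query parameter at the end of the event's `source` URL.

```csharp
using System;

// Hypothetical helper mirroring the Logic App condition: both the event type
// and the channel ID (taken from the event source URL) must match.
static bool ShouldProcess(string eventType, string eventSource, string myChannelId) =>
    eventType == "com.youtube.video.published"
    && eventSource.EndsWith($"channel_id={myChannelId}", StringComparison.Ordinal);

var source = "https://www.youtube.com/xml/feeds/videos.xml?channel_id=my-channel";

// Same channel and expected type: the event goes on to the next action.
Console.WriteLine(ShouldProcess("com.youtube.video.published", source, "my-channel"));

// Different channel: the handler stops processing.
Console.WriteLine(ShouldProcess("com.youtube.video.published", source, "another-channel"));
```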

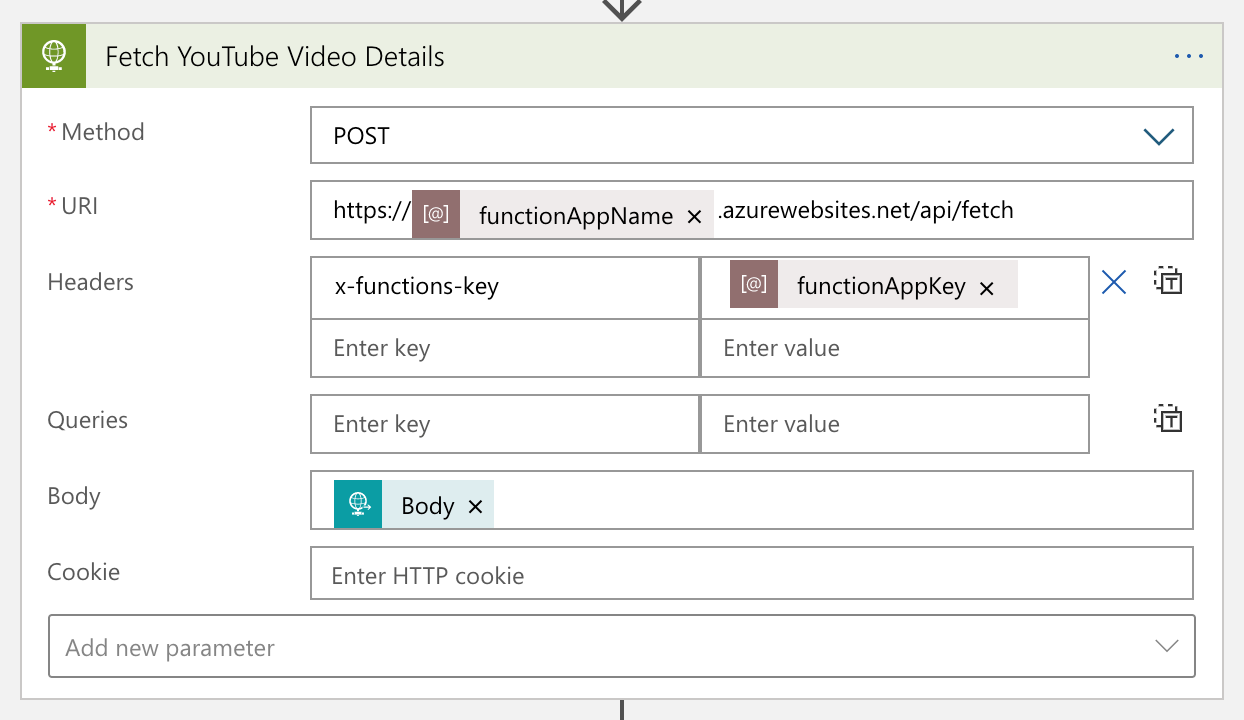

If the event data is what I am looking for, the handler passes it to Azure Functions for further manipulation.
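Before any further detail can be looked up, the video ID and channel ID have to be pulled out of the XML carried in the event's `data` field. Here is a minimal sketch of that step with LINQ to XML, using the two namespaces from the feed shown earlier. `ExtractIds` is a hypothetical helper, not the repository's implementation.

```csharp
using System;
using System.Xml.Linq;

// Hypothetical helper: pulls the video ID and channel ID out of the ATOM payload.
static (string VideoId, string ChannelId) ExtractIds(string xml)
{
    XNamespace atom = "http://www.w3.org/2005/Atom";
    XNamespace yt = "http://www.youtube.com/xml/schemas/2015";

    var entry = XDocument.Parse(xml).Root?.Element(atom + "entry")
                ?? throw new FormatException("The feed has no <entry> element.");

    // The explicit string conversion yields null when an element is missing.
    return ((string)entry.Element(yt + "videoId"), (string)entry.Element(yt + "channelId"));
}

var xml = @"<feed xmlns:yt=""http://www.youtube.com/xml/schemas/2015"" xmlns=""http://www.w3.org/2005/Atom"">
  <entry>
    <yt:videoId>[video_id]</yt:videoId>
    <yt:channelId>[channel_id]</yt:channelId>
  </entry>
</feed>";

var (videoId, channelId) = ExtractIds(xml);
Console.WriteLine($"{videoId} / {channelId}");
```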

The Azure Functions app calls the YouTube API to get more details of the video, manipulates them, and returns the result to the Logic App. The converted data looks like:

```json
{
  "channelId": "<channel_id>",
  "videoId": "<video_id>",
  "title": "hello world",
  "description": "Lorem ipsum dolor sit amet, consectetur adipiscing elit. Duis malesuada.",
  "link": "https://www.youtube.com/watch?v=<video_id>",
  "thumbnailLink": "https://i.ytimg.com/vi/<video_id>/maxresdefault.jpg",
  "datePublished": "2021-01-27T07:00:00+00:00",
  "dateUpdated": "2021-01-27T07:00:00+00:00"
}
```

YouTube data has been massaged for our purpose.

Social Media Exposure

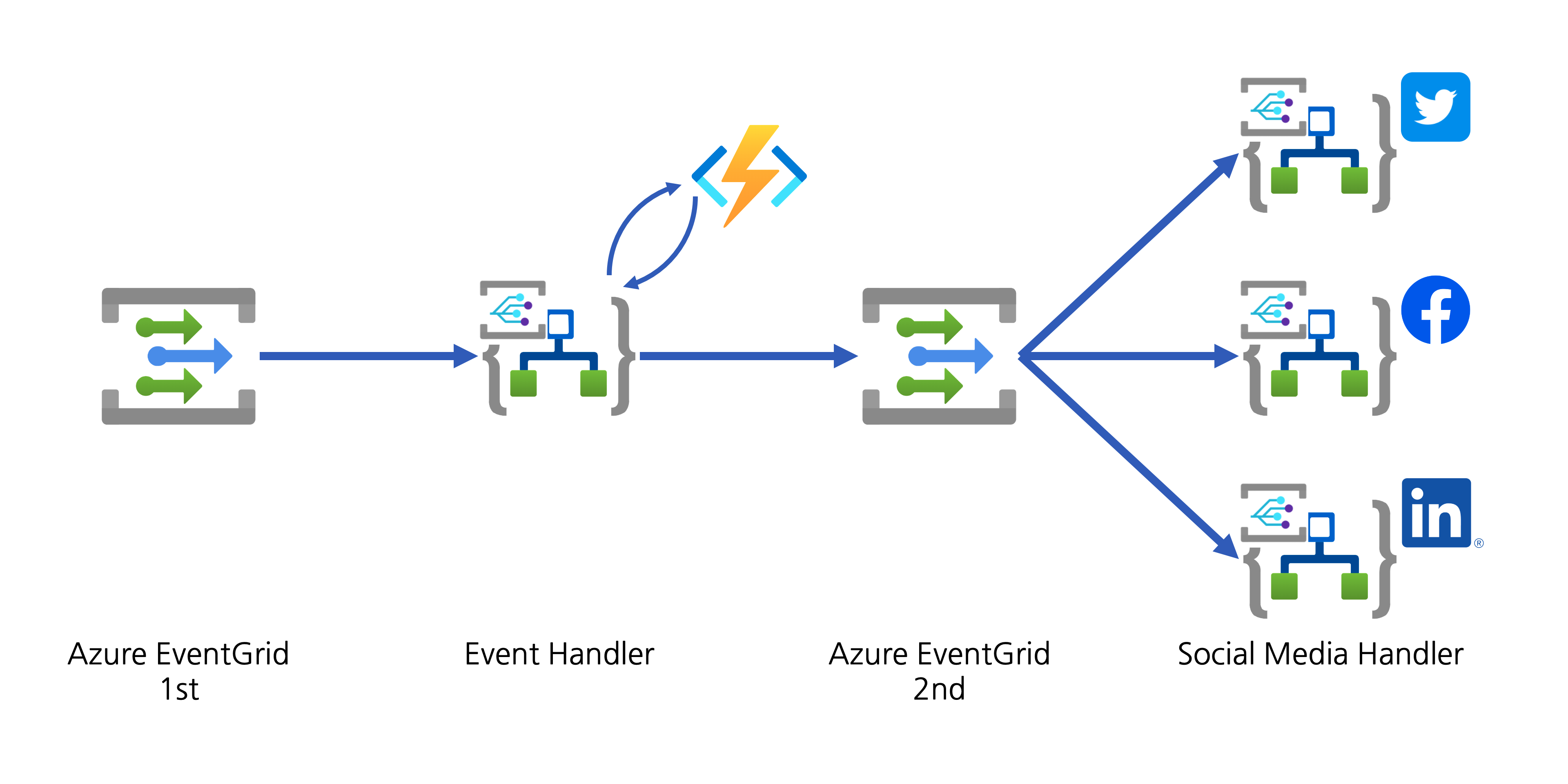

The event handler now needs to help spread the YouTube video update to the world through designated social media. There are two approaches:

- The event handler directly connects to APIs of individual social media, or

- The event handler publishes another event to Azure EventGrid so that other event handlers take care of social media amplification.

Although both approaches are valid, the first one has strong coupling between the handler and the amplifiers. If you need to add a new amplifier or remove an existing one, the Logic App handler must be updated, which is less desirable from the maintenance perspective. On the other hand, the second approach publishes another event containing the converted data. All social media amplifiers act as event handlers, and they are all decoupled. I chose the second one.

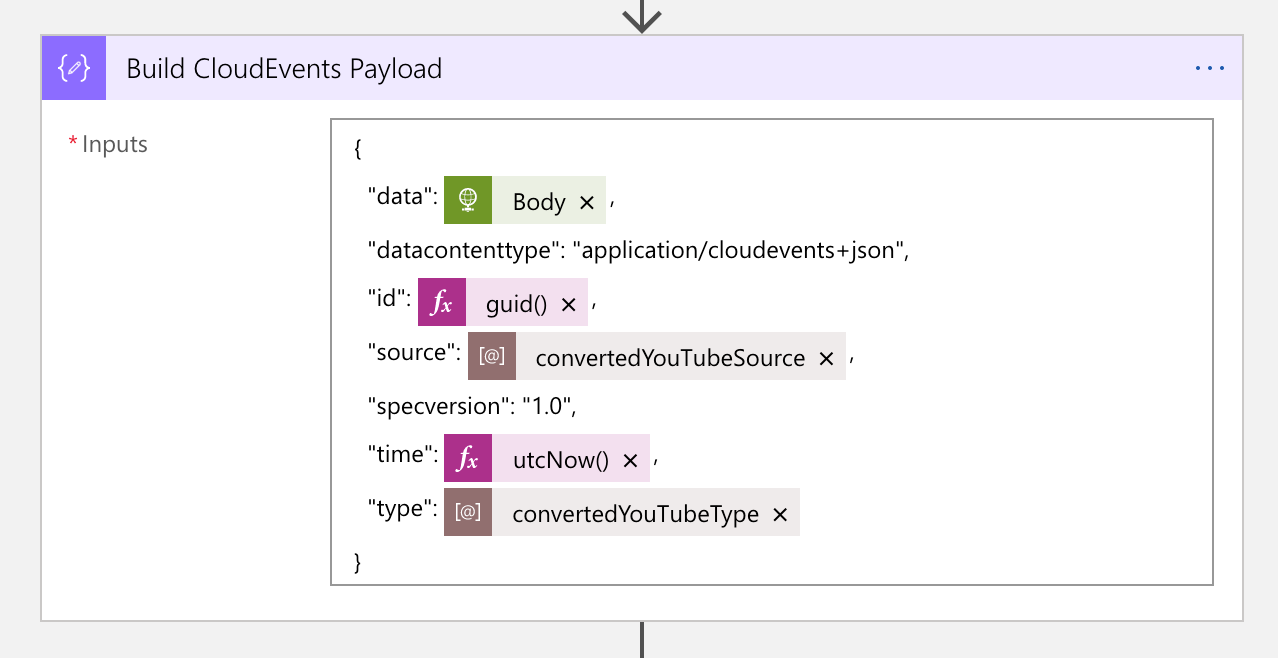

1. Event Publish: Converted YouTube Video Details

In order to publish the converted YouTube video details to Azure EventGrid, the data needs to be wrapped in the CloudEvents format. The screenshot shows the action that wraps the video details data with CloudEvents. This time, the event type will be com.youtube.video.converted.

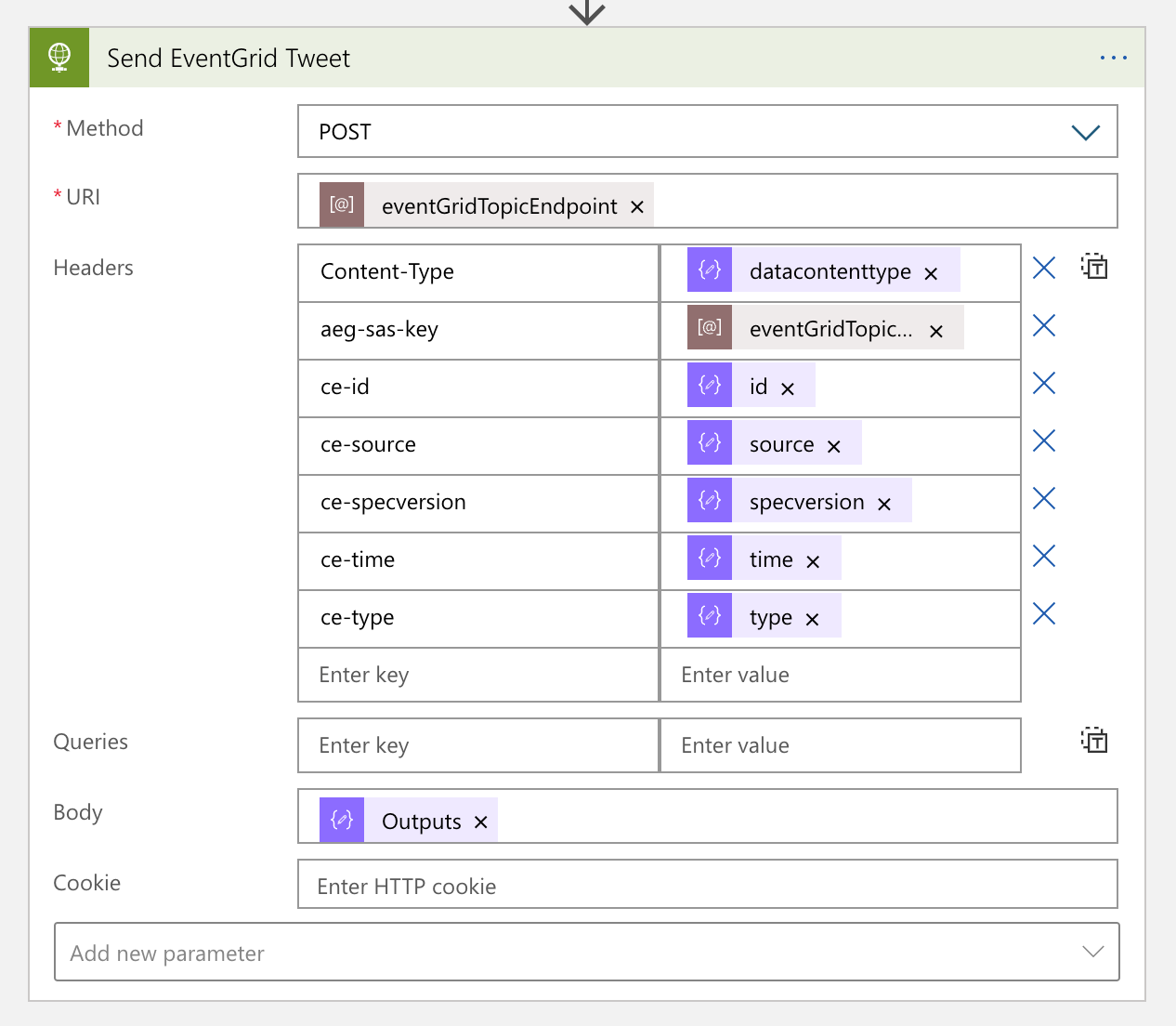

The next action is to send an HTTP request to Azure EventGrid with the CloudEvents payload. Notice that many of the metadata headers start with ce-, as defined in the CloudEvents HTTP protocol binding spec.
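For readers who prefer code to screenshots, the shape of such a request can be sketched as follows. This is an illustration, not the Logic App action: `BuildCloudEventRequest` is a hypothetical helper, the endpoint and key are placeholders, and the `application/json` content type for the body is an assumption. The `ce-` headers carry the CloudEvents attributes, while `aeg-sas-key` is the EventGrid header for access key authentication.

```csharp
using System;
using System.Linq;
using System.Net.Http;
using System.Text;

// Hypothetical helper: builds an HTTP request carrying the CloudEvents
// attributes as ce- headers and the event data as the request body.
static HttpRequestMessage BuildCloudEventRequest(
    Uri topicEndpoint, string accessKey, string source, string type, string jsonData)
{
    var request = new HttpRequestMessage(HttpMethod.Post, topicEndpoint)
    {
        // The body holds only the event data; the metadata goes into headers.
        Content = new StringContent(jsonData, Encoding.UTF8, "application/json"),
    };

    request.Headers.TryAddWithoutValidation("aeg-sas-key", accessKey);
    request.Headers.TryAddWithoutValidation("ce-id", Guid.NewGuid().ToString());
    request.Headers.TryAddWithoutValidation("ce-specversion", "1.0");
    request.Headers.TryAddWithoutValidation("ce-source", source);
    request.Headers.TryAddWithoutValidation("ce-type", type);
    request.Headers.TryAddWithoutValidation("ce-time", DateTimeOffset.UtcNow.ToString("o"));

    return request;
}

var request = BuildCloudEventRequest(
    new Uri("https://example.com/api/events"),   // placeholder topic endpoint
    "eventgrid_topic_access_key",
    "https://www.youtube.com/xml/feeds/videos.xml?channel_id=[channel_id]",
    "com.youtube.video.converted",
    "{\"videoId\":\"[video_id]\"}");

// List the CloudEvents metadata headers.
foreach (var header in request.Headers.Where(h => h.Key.StartsWith("ce-")))
{
    Console.WriteLine($"{header.Key}: {string.Join(",", header.Value)}");
}
```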

The message handler now completes its workflow. From now on, each social media handler takes care of the new event data.

2. Event Handlers: Social Media Amplification

YouTube video details are now ready for amplification! Each social media handler takes care of the event data, adapting it to its own platform. The event data received from EventGrid looks like this:

```json
{
  "id": "4cee6312-6584-462f-a8c0-c3d5d0cbfcb1",
  "specversion": "1.0",
  "source": "https://www.youtube.com/xml/feeds/videos.xml?channel_id=<channel_id>",
  "type": "com.youtube.video.converted",
  "time": "2021-01-16T05:21:23.9068402Z",
  "datacontenttype": "application/cloudevents+json",
  "data": {
    "channelId": "<channel_id>",
    "videoId": "<video_id>",
    "title": "hello world",
    "description": "Lorem ipsum dolor sit amet, consectetur adipiscing elit. Duis malesuada.",
    "link": "https://www.youtube.com/watch?v=<video_id>",
    "thumbnailLink": "https://i.ytimg.com/vi/<video_id>/maxresdefault.jpg",
    "datePublished": "2021-01-27T07:00:00+00:00",
    "dateUpdated": "2021-01-27T07:00:00+00:00"
  }
}
```
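Whatever the platform, a handler ends up turning this event into a post. As a rough sketch of that adaptation, the function below reads the `title` and `link` fields from the event and composes a short text. `ComposePost` is a hypothetical helper, and the 280-character default is an assumed limit that each handler would replace with its own platform's rule.

```csharp
using System;
using System.Text.Json;

// Hypothetical helper: turns the converted event into a short post text.
static string ComposePost(string cloudEventJson, int maxLength = 280)
{
    using var doc = JsonDocument.Parse(cloudEventJson);
    var data = doc.RootElement.GetProperty("data");
    var title = data.GetProperty("title").GetString() ?? string.Empty;
    var link = data.GetProperty("link").GetString() ?? string.Empty;

    // Shorten the title, never the link, when the text would exceed the limit.
    var room = maxLength - link.Length - 1;
    if (title.Length > room)
    {
        title = title.Substring(0, Math.Max(0, room - 3)) + "...";
    }

    return $"{title} {link}";
}

var json = "{\"type\":\"com.youtube.video.converted\",\"data\":{"
         + "\"title\":\"hello world\",\"link\":\"https://www.youtube.com/watch?v=[video_id]\"}}";

Console.WriteLine(ComposePost(json));
```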

Twitter Amplification

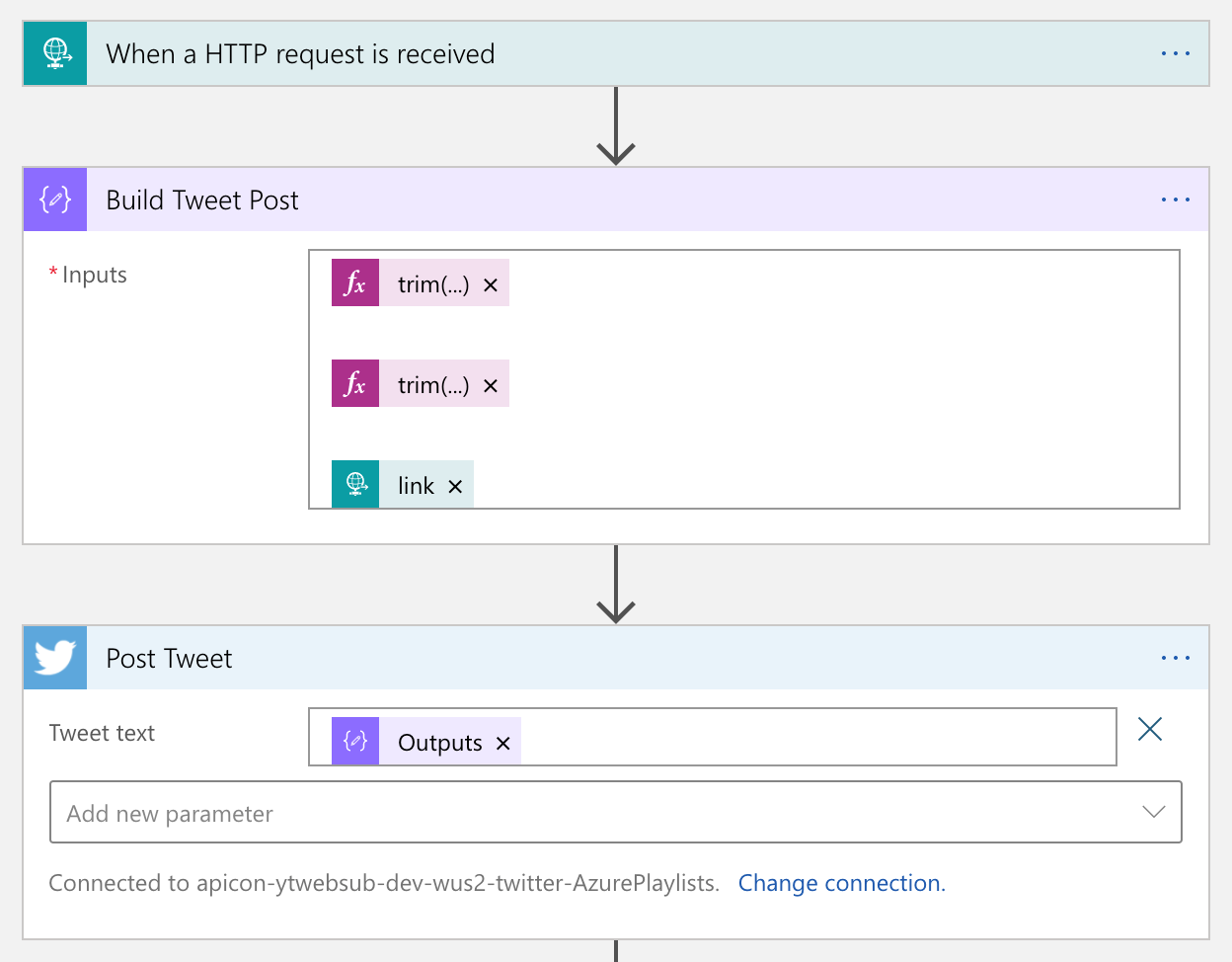

As Logic Apps provides the Twitter connector out-of-the-box, we don't need to call the API ourselves. Therefore, simply use the actions like below:

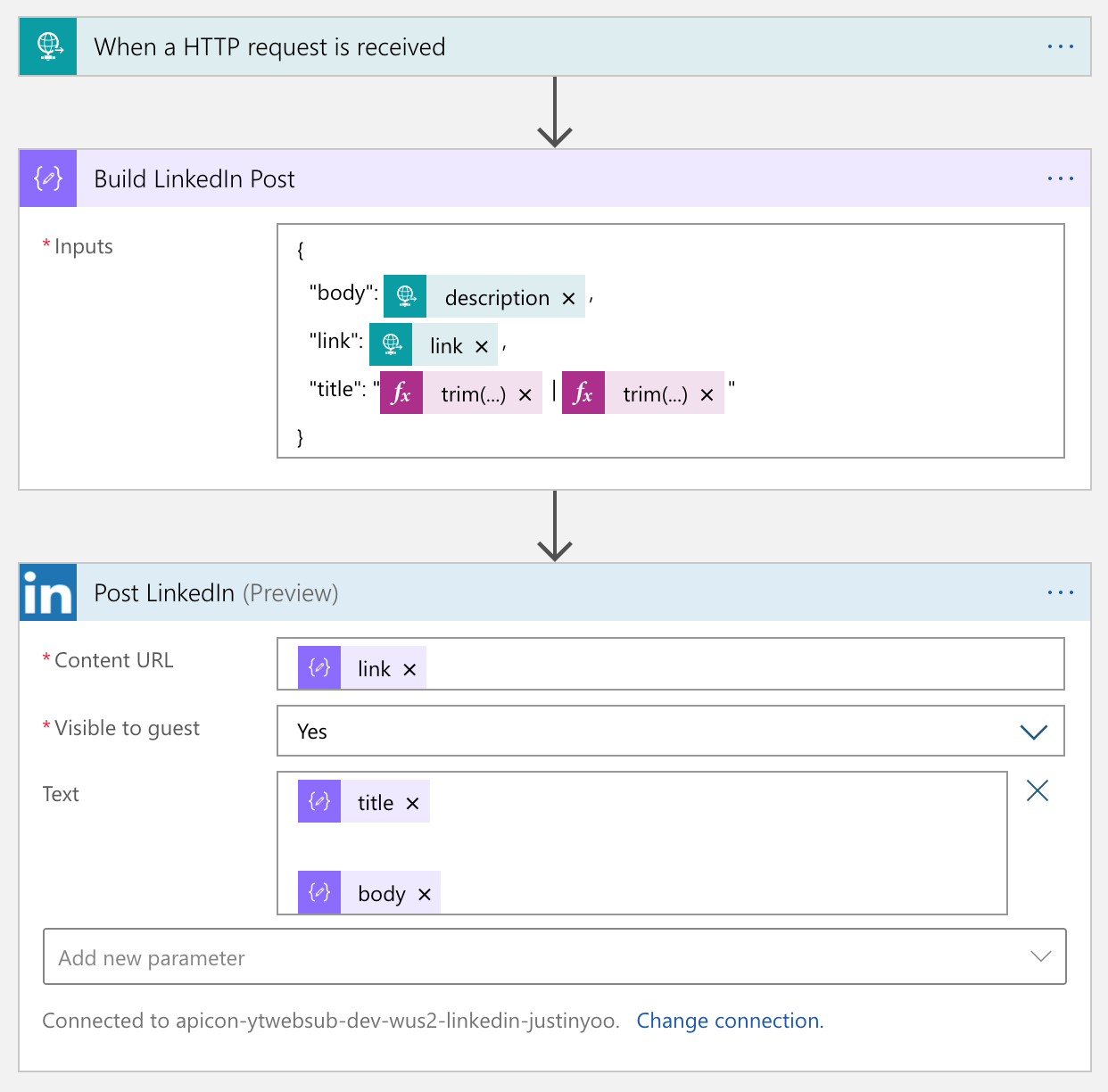

Logic Apps also provides a built-in LinkedIn connector, so we simply use it.

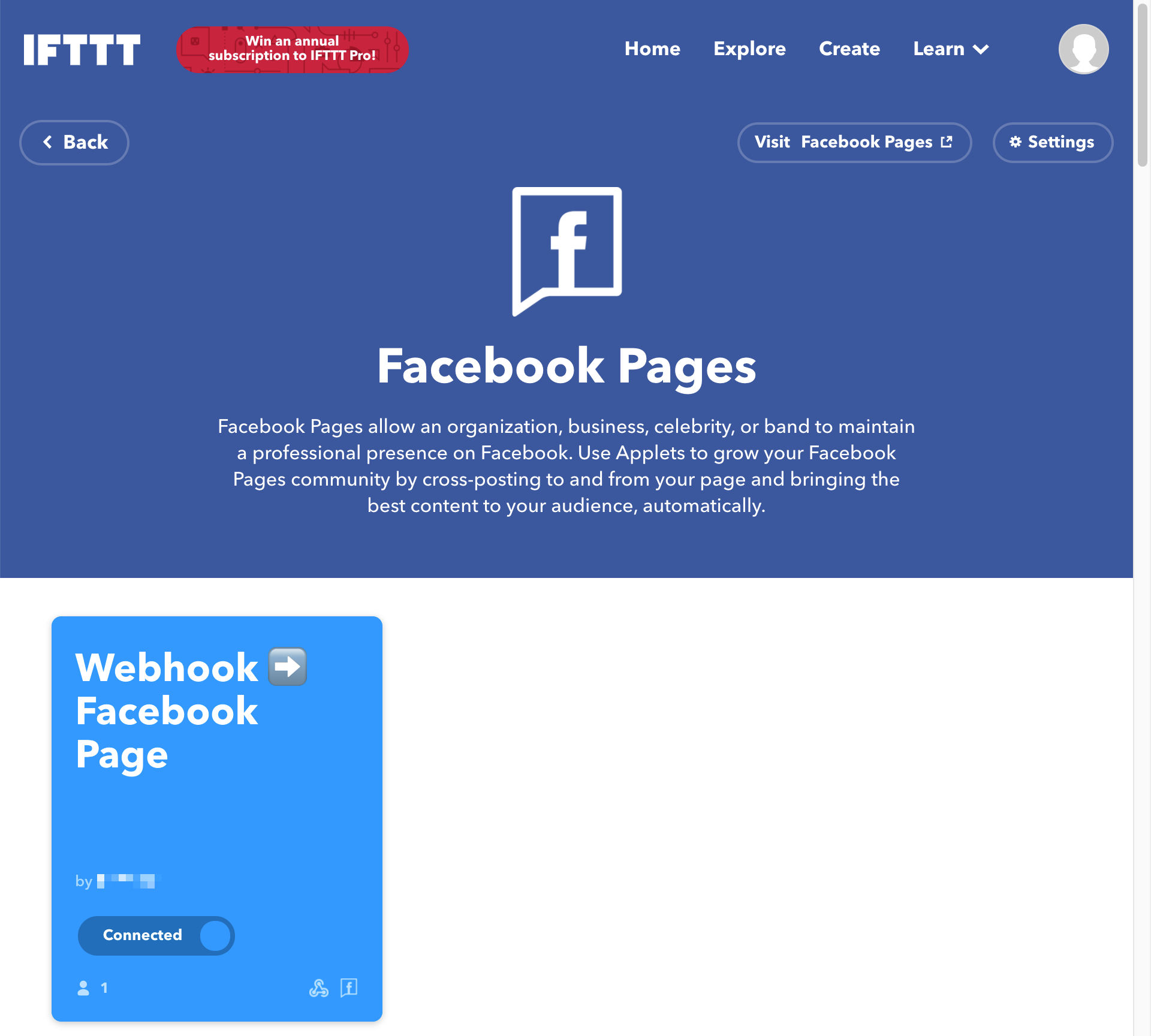

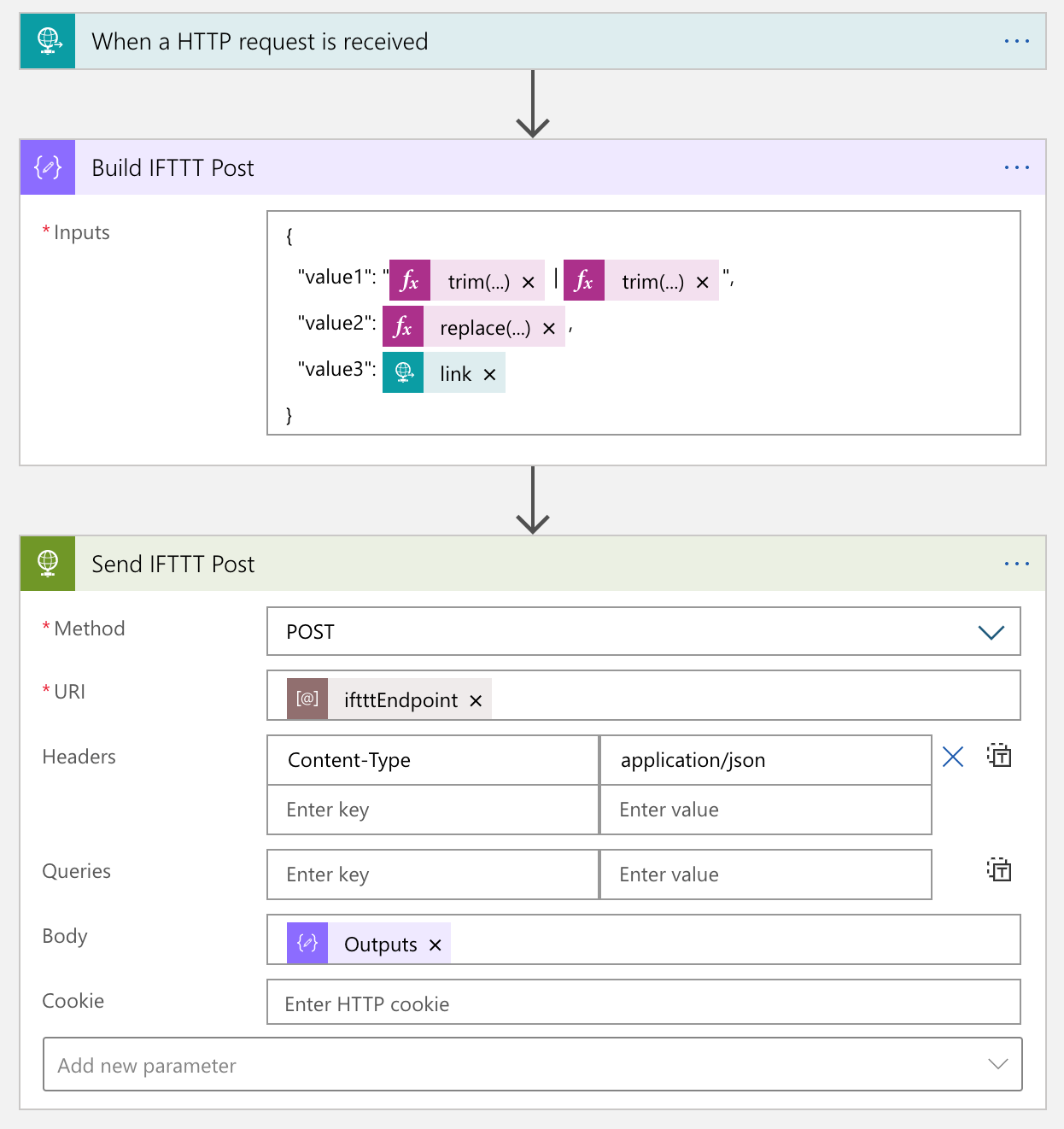

Unlike the other two connectors, the Facebook connector has been deprecated and has become an open-source project instead. So, we should use either this open-sourced custom connector or something else. Fortunately, IFTTT provides the Facebook Page connector, so we just use it.

From the Logic App point of view, calling IFTTT is just another HTTP call, so it's not that tricky. The only thing to remember is that the request payload can include no more than three fields: value1, value2 and value3.
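To make the three-field limit concrete, here is a sketch of how the video details could be squeezed into that payload. `BuildIftttPayload` is a hypothetical helper, and the mapping of title, link and description onto the three fields is an assumption; the applet on the IFTTT side decides how each value is used.

```csharp
using System;
using System.Collections.Generic;
using System.Text.Json;

// Hypothetical helper: packs the video details into the three value fields.
static string BuildIftttPayload(string title, string link, string description) =>
    JsonSerializer.Serialize(new Dictionary<string, string>
    {
        ["value1"] = title,
        ["value2"] = link,
        ["value3"] = description,
    });

var payload = BuildIftttPayload(
    "hello world",
    "https://www.youtube.com/watch?v=[video_id]",
    "Lorem ipsum dolor sit amet, consectetur adipiscing elit. Duis malesuada.");

Console.WriteLine(payload);

// The payload is then POSTed as application/json to the webhook URL (assumed format):
// https://maker.ifttt.com/trigger/{event_name}/with/key/{webhook_key}
```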

The actual process result on the IFTTT end looks like this:

We've now amplified the video update to Twitter, LinkedIn and Facebook.

If you want to add another social media platform, you can simply add another Logic App as the event handler.

So far, we've implemented a workflow solution that posts to designated social media platforms when a new YouTube video update is notified through WebSub, using CloudEvents, Azure EventGrid, Azure Functions and Azure Logic Apps. As the steps are all decoupled, we don't need to worry about dependencies during the maintenance phase. In addition, even if a new social media platform is added later, it won't impact the existing solution architecture.

If you or your organisation is planning online content marketing, it's worth building this sort of system yourself. It would also be an excellent opportunity to build a well-decoupled, event-driven cloud solution architecture.